Three Self-Healing Patches in One Day, All the Same Shape

Three pipeline gaps surfaced in a single afternoon. Each had been silent for weeks. Each was the same shape.

Quick Take

- The Full Pipeline silently ignored 8 articles that had no hero images

- The WebP converter bailed early on “no PNGs” and skipped the frontmatter-update pass that would have fixed it

- The tag vocabulary drifted because nothing enforced it on build

- The fix for all three: detect-on-every-run, automatic-or-loud-block, idempotent

If you have a content pipeline that has been running for more than a month

Audit yours for the same three classes of silent failure today:

- Existing-content blind spot. Does your pipeline scan the corpus on every run, or only act on new items pulled from the source? Run find against your content directory and grep for a frontmatter field your pipeline is supposed to maintain. Count the misses.

- Phase-early-exit gaps. Do any of your pipeline stages return on “nothing to do” before reaching downstream side-effects (frontmatter rewrites, KB regeneration, sitemap updates)? Walk every stage’s exit paths.

- Unenforced invariants. Does your tag vocabulary, schema, or naming convention have a build-time validator, or just a wiki page nobody reads? If only the wiki, you have already drifted.
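The first audit can also be done in a few lines of Python instead of find plus grep. This is a minimal sketch, not the author's tooling: it assumes Markdown files with ---‑delimited frontmatter and counts a field as present only when a frontmatter line starts with "field:" and has a non-empty value. The names frontmatter_lines and find_missing_field are hypothetical.

```python
from pathlib import Path
import tempfile


def frontmatter_lines(text: str) -> list[str]:
    """Return the lines between the opening and closing '---' fences, or [] if none."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return []
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            return lines[1:i]
    return []  # unterminated frontmatter: treat as absent


def find_missing_field(content_dir: Path, field: str) -> list[str]:
    """List slugs of *.md files whose frontmatter lacks a non-empty `field:` entry."""
    missing = []
    for md in sorted(content_dir.glob("*.md")):
        fm = frontmatter_lines(md.read_text(encoding="utf-8"))
        has_field = any(
            line.startswith(f"{field}:") and line.split(":", 1)[1].strip()
            for line in fm
        )
        if not has_field:
            missing.append(md.stem)
    return missing


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        blog = Path(tmp)
        (blog / "a.md").write_text('---\ntitle: A\nheroImage: "/images/blog/a/hero.webp"\n---\nBody\n')
        (blog / "b.md").write_text("---\ntitle: B\n---\nBody mentions heroImage: in prose\n")
        misses = find_missing_field(blog, "heroImage")
        print(f"{len(misses)} missing: {misses}")  # prints: 1 missing: ['b']
```

Restricting the match to the frontmatter block matters: a plain grep would count b.md as compliant because its body happens to mention the field.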

The three patches below show what fixing each looks like. They are 60-120 lines of Python total. Block out 90 minutes.

Gap 1: pipeline only processed new articles

update_blog_from_gitea.py pulls fresh docs from Gitea, redacts via Mistral, writes to src/content/blog/, queues image-prompts in pending_images.json, swaps to ComfyUI for FLUX-1, builds, pushes. The classic content-pipeline shape.

What it never did: scan existing articles for missing hero images. After 14 article additions over five weeks, 8 articles had no heroImage: in their frontmatter and no public/images/blog/<slug>/hero.webp on disk. They rendered with placeholder pills like [setup] or [fix] in the article-card image slot. They published. They ranked. They looked broken.

I only noticed when an MCP-stats audit pointed at heroless articles as a discovery-quality risk. By then the gap was a month old.

The fix is a 30-line helper that runs in the existing Phase 1, right before save_pending_images():

```python
from pathlib import Path

# _parse_simple_frontmatter, generate_image_prompts_with_mistral and
# _fallback_prompt are defined elsewhere in update_blog_from_gitea.py.


def _scan_heroless_articles(blog_dir: Path, config) -> list[dict]:
    """Scan src/content/blog/*.md for files without heroImage frontmatter."""
    entries = []
    for md_file in sorted((blog_dir / "src/content/blog").glob("*.md")):
        text = md_file.read_text(encoding="utf-8")
        fm = _parse_simple_frontmatter(text)
        if fm.get("heroImage"):
            continue  # already has a hero: nothing to queue
        slug = md_file.stem
        try:
            prompts = generate_image_prompts_with_mistral(
                title=fm.get("title", slug),
                description=fm.get("description", ""),
                image_types=["hero", "caricature"],
                config=config,
                style="smart_infotainment",
            )
        except Exception:
            prompts = {}  # a Mistral failure is not fatal: _fallback_prompt fills in below
        # Emit one entry per image_type, matching generate_blog_images.py schema:
        for img_type in ("hero", "caricature"):
            entries.append({
                "slug": slug,
                "image_type": img_type,
                "prompt": prompts.get(img_type) or _fallback_prompt(slug, img_type),
                "output_path": f"public/images/blog/{slug}/{img_type}.webp",
                "source": "backfill-heroless",
            })
    return entries
```

Then in the main flow:

```python
heroless_entries = _scan_heroless_articles(BLOG_DIR, config)
combined = existing_pending + new_gitea_items + heroless_entries

# Dedupe by (slug, image_type), last-write-wins:
seen, deduped = set(), []
for entry in reversed(combined):
    key = (entry["slug"], entry["image_type"])
    if key not in seen:
        seen.add(key)
        deduped.append(entry)
save_pending_images(list(reversed(deduped)))
```

Cost: about 5 seconds per heroless article (Mistral prompt generation is the slow part). My run detected 8, added 16 entries to the queue, then handed off to ComfyUI for FLUX-1. The Full Pipeline now self-heals on every run.
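The post does not show _parse_simple_frontmatter. A minimal sketch of what such a helper might look like, assuming flat key: value frontmatter with optional surrounding quotes (no nested YAML, no lists); parse_simple_frontmatter here is a hypothetical stand-in, not the author's code:

```python
def parse_simple_frontmatter(text: str) -> dict[str, str]:
    """Parse flat `key: value` pairs from a leading ---...--- block (hypothetical helper)."""
    if not text.startswith("---"):
        return {}
    end = text.find("\n---", 3)  # start of the closing fence
    if end < 0:
        return {}
    fields: dict[str, str] = {}
    for line in text[3:end].splitlines():
        if ":" not in line or line.startswith((" ", "\t", "#")):
            continue  # skip blanks, comments, and indented (nested) lines
        key, value = line.split(":", 1)  # maxsplit=1 keeps URLs with ':' intact
        fields[key.strip()] = value.strip().strip('"').strip("'")
    return fields
```

An empty heroImage: line parses to an empty string, which the if fm.get("heroImage") check in the scanner treats the same as a missing field.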

Gap 2: convert_images_to_webp.py bailed early

The first Backfill-Heroes run produced 16 images. ComfyUI generated them as PNGs, convert_images_to_webp.py converted them to WebP, and the pipeline built and pushed. The commit message said feat(blog): backfill 16 hero image(s). Looked perfect.

The site still rendered placeholder pills. Eight articles. No hero images.

The cause was in two parts. First, the WebP converter:

```python
def convert_all(dry_run: bool) -> None:
    pngs = sorted(IMAGES_DIR.rglob("*.png"))
    if not pngs:
        print("No PNG files found.")
        return  # <-- early exit, skips everything below
    # ... PNG conversion ...
    # ... frontmatter update (rewrites .png to .webp in heroImage:) ...
```

When the script ran a second time, after the PNGs had already been converted and unlinked, it correctly reported “No PNG files found.” But that early exit also skipped the frontmatter-update pass.

Second, the frontmatter-update pass was a string-replace, not an inserter:

```python
new_text = text.replace(".png", ".webp")
```

For articles with an existing heroImage: "...hero.png", this rewrote the path to .webp cleanly. For articles with no heroImage: line at all (the eight we had just generated images for), there was nothing to rewrite. The frontmatter never gained the field. The site never learned about the images.
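The failure is easy to reproduce in isolation. A minimal sketch using two hypothetical frontmatter strings: the blind replace changes the first and leaves the second byte-for-byte identical, so the "did anything change?" check before writing never fires for the article that actually needs the field.

```python
def rewrite_png_refs(text: str) -> str:
    """The original pass: a blind string replace of .png with .webp."""
    return text.replace(".png", ".webp")


with_field = '---\ntitle: A\nheroImage: "/images/blog/a/hero.png"\n---\n'
without_field = "---\ntitle: B\n---\n"

print(rewrite_png_refs(with_field) != with_field)        # True: there was a path to rewrite
print(rewrite_png_refs(without_field) == without_field)  # True: nothing rewritten, nothing inserted
```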

The fix makes the frontmatter pass unconditional: it now runs whether or not there were PNGs to convert.

```python
def convert_all(dry_run: bool) -> None:
    pngs = sorted(IMAGES_DIR.rglob("*.png"))
    if not pngs:
        print("No PNG files to convert — running frontmatter self-heal pass only.")
        _update_frontmatter([], dry_run)
        return
    # ... PNG conversion (builds the `converted` list) ...
    _update_frontmatter(converted, dry_run)
```

And the frontmatter pass now does two things, not one:

```python
def _update_frontmatter(converted: list, dry_run: bool) -> None:
    # ROOT and BLOG_DIR are module-level constants defined elsewhere in the script.
    PUBLIC_IMAGES = ROOT / "public" / "images" / "blog"
    for md in sorted(BLOG_DIR.glob("*.md")):
        text = md.read_text(encoding="utf-8")
        new_text = text.replace(".png", ".webp")  # pass 1: rewrite existing
        # pass 2: insert if missing AND image exists on disk
        if "heroImage:" not in new_text:
            slug = md.stem
            hero_path = PUBLIC_IMAGES / slug / "hero.webp"
            if hero_path.exists() and new_text.startswith("---"):
                fm_end = new_text.find("\n---", 3)  # closing frontmatter fence
                if fm_end > 0:
                    inject = f'\nheroImage: "/images/blog/{slug}/hero.webp"'
                    new_text = new_text[:fm_end] + inject + new_text[fm_end:]
        if new_text != text:
            if dry_run:
                print(f"[dry-run] would update {md.name}")
            else:
                md.write_text(new_text, encoding="utf-8")
```

Now it runs every time. If an article has no heroImage: and a matching hero.webp exists on disk, the frontmatter gets the field injected. The reverse case (orphan frontmatter pointing at a non-existent image) is left alone. That is a different bug class and needs different handling.
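The injection step is easiest to reason about, and to test for idempotence, when pulled out as a pure function. A sketch under that assumption: inject_hero is a hypothetical name, and its hero_exists flag stands in for the hero_path.exists() check so the function touches no files.

```python
def inject_hero(text: str, slug: str, hero_exists: bool) -> str:
    """Insert a heroImage line before the closing frontmatter fence when the field
    is missing and the hero file exists; otherwise return the text unchanged."""
    if "heroImage:" in text or not hero_exists or not text.startswith("---"):
        return text
    fm_end = text.find("\n---", 3)  # start of the closing fence, or -1
    if fm_end < 0:
        return text
    inject = f'\nheroImage: "/images/blog/{slug}/hero.webp"'
    return text[:fm_end] + inject + text[fm_end:]
```

Calling it a second time on its own output returns the text unchanged, which is the property that makes running the pass on every build safe.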

Gap 3: tag vocabulary drifted silently

Earlier the same day I had migrated the blog’s tags from a sprawling 19-tag set (including smithery, both perf and performance, fixes-vs-fix plural drift, and three singleton experiments) to a closed vocabulary of 4 content types plus 18 topics. The drift had grown over months because nothing enforced the vocabulary at build time.

I expected it to drift back the moment Mistral generated a new article and decided that flashy-ai was a good tag.

The fix is a 60-line validator:

```python
import sys

import yaml  # PyYAML

# VOCAB_PATH, BLOG_DIR and parse_tags are defined elsewhere in validate_tags.py.


def main() -> int:
    vocab = yaml.safe_load(VOCAB_PATH.read_text())
    # Allowed = every content type plus every topic in every topic cluster.
    allowed = set(vocab.get("content_types", {}).keys())
    for cluster in vocab.get("topics", {}).values():
        allowed.update(cluster.keys())

    violations = []
    for md in sorted(BLOG_DIR.glob("*.md")):
        tags = parse_tags(md.read_text())
        bad = [t for t in tags if t not in allowed]
        if bad:
            violations.append((md.stem, bad))

    if not violations:
        print(f" ✅ {sum(1 for _ in BLOG_DIR.glob('*.md'))} articles — all tags conform")
        return 0
    print(f" ❌ {len(violations)} articles with tag-drift:")
    for slug, bad in violations:
        print(f" {slug}: {', '.join(bad)}")
    print(f" Allowed tags ({len(allowed)}): {', '.join(sorted(allowed))}")
    return 1


if __name__ == "__main__":
    sys.exit(main())  # the exit code drives the || guard in the shell scripts
```

It runs as Phase 7 prep in both blog-full-pipeline.sh and blog-backfill-heroes.sh, immediately before npm run build:

```bash
echo " 🛡 Tag-Vocabulary-Validator..."
python3 scripts/validate_tags.py || { echo " ❌ Tag-Drift detected — build aborted."; exit 1; }
npm run build
```

The next time Mistral fabricates a tag, the pipeline crashes loudly with the slug, the bad tag, and the allowed list. Either I add the tag to the vocabulary (a deliberate choice) or fix the article (Mistral was wrong). What I cannot do anymore is ship it.
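The post does not show parse_tags either. A minimal sketch, assuming tags live in the frontmatter as either an inline list (tags: [setup, fix]) or a block list of "- item" lines; anything fancier (anchors, multi-line flow lists) would be a reason to run yaml.safe_load on the frontmatter instead. The function here is a hypothetical stand-in.

```python
def parse_tags(text: str) -> list[str]:
    """Extract tags from frontmatter written as `tags: [a, b]` or as a `- item` block list.

    Hypothetical helper: assumes flat YAML frontmatter delimited by '---' lines.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return []
    tags: list[str] = []
    in_block = False
    for line in lines[1:]:
        stripped = line.strip()
        if stripped == "---":
            break  # end of frontmatter; body text is never scanned
        if stripped.startswith("tags:"):
            value = stripped[len("tags:"):].strip()
            if value.startswith("[") and value.endswith("]"):
                tags = [t.strip().strip("\"'") for t in value[1:-1].split(",") if t.strip()]
                in_block = False
            else:
                in_block = value == ""  # bare `tags:` opens a block list
            continue
        if in_block:
            if stripped.startswith("- "):
                tags.append(stripped[2:].strip().strip("\"'"))
            elif stripped:
                in_block = False  # the next key ends the block list
    return tags
```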

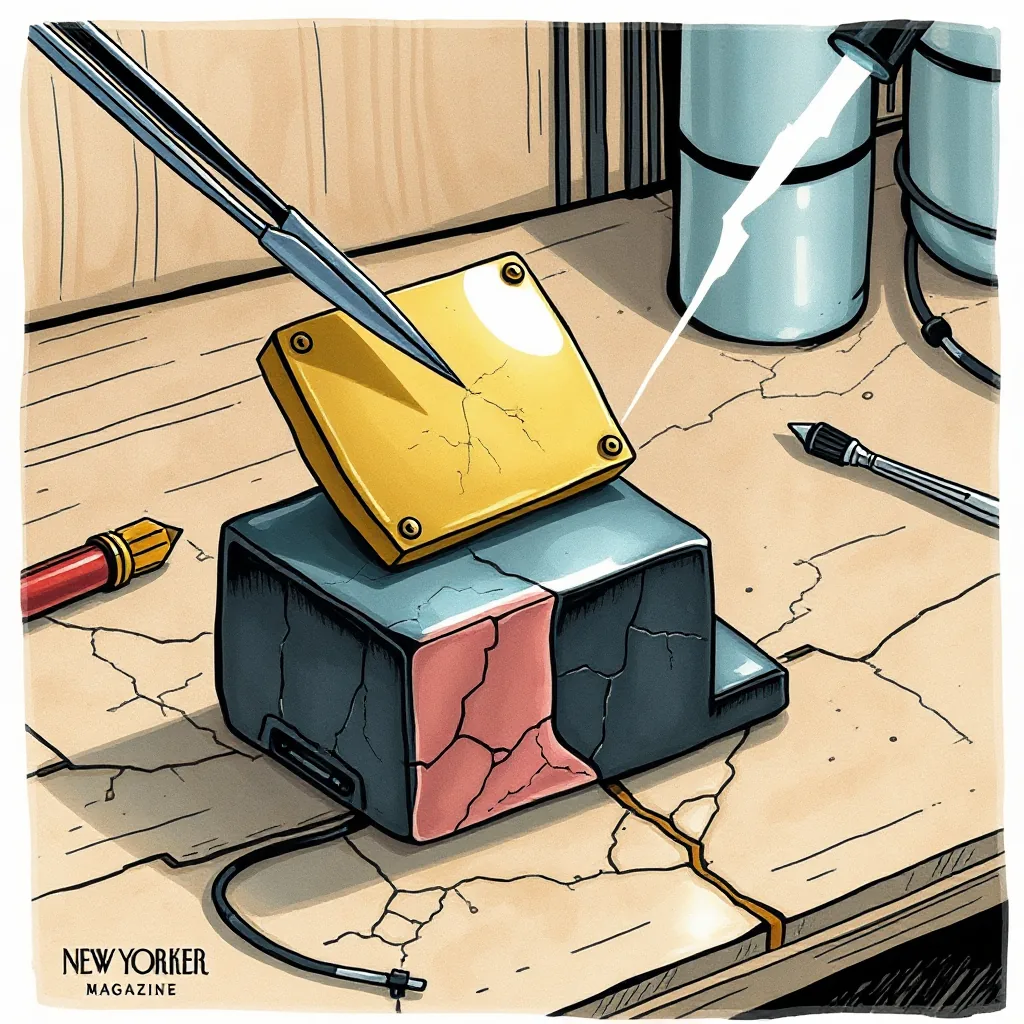

The shared shape

All three patches do the same three things:

- Detect on every run, not only on changes. The Full Pipeline used to act only on new Gitea pulls. Now it scans the entire content directory for invariant violations every time. Cost is small. Coverage is total.

- Auto-fix when the fix is unambiguous. Block loudly otherwise. Heroless articles get prompts generated and queued. No judgment call there. A missing heroImage: on an article with a matching hero.webp on disk gets the field injected into the frontmatter. No judgment call there either. A tag outside the vocabulary needs a human decision (extend the vocabulary, or fix the article), so it blocks the build.

- Idempotent everywhere. Re-running the heroless scan on a clean state finds zero articles and queues nothing. Re-running the WebP converter on already-converted images finds zero PNGs, and its frontmatter pass finds nothing to change. Re-running the tag validator on a clean state exits zero. No state-bombs, no double-processing.
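The three rules fit in one small harness. A sketch under stated assumptions, not the author's code: each check is a hypothetical zero-argument callable that repairs the unambiguous cases itself and reports the rest, and run_checks turns any blocking report into a non-zero exit code that a shell guard such as the || { ...; exit 1; } in the build scripts can act on.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CheckResult:
    """Outcome of one invariant check (hypothetical structure)."""
    name: str
    fixed: list[str] = field(default_factory=list)     # unambiguous issues the check already repaired
    blocking: list[str] = field(default_factory=list)  # issues that need a human decision


def run_checks(checks: list[Callable[[], CheckResult]]) -> int:
    """Run every check on every invocation; return 1 if any check reports a blocker."""
    exit_code = 0
    for check in checks:
        result = check()
        for item in result.fixed:
            print(f"  🔧 {result.name}: fixed {item}")
        for item in result.blocking:
            print(f"  ❌ {result.name}: {item}")
        if result.blocking:
            exit_code = 1  # keep going so a single run reports every blocker
    return exit_code


if __name__ == "__main__":
    checks = [
        lambda: CheckResult("heroless-scan", fixed=["queued hero + caricature for some-slug"]),
        lambda: CheckResult("tag-vocabulary", blocking=["some-slug: flashy-ai"]),
        lambda: CheckResult("frontmatter"),  # clean state: nothing fixed, nothing blocking
    ]
    print("exit code:", run_checks(checks))
```

A check that finds a clean state returns an empty CheckResult, so it prints nothing and leaves the exit code alone; that is the idempotence rule expressed as a return value.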

This is the same shape as the four-check backup-verify pattern in the postmortem listed just above this article on the blog. Different domain (backup verification vs content pipeline), same discipline: run the check every time, believe the check more than the timer, fix it or fail loud.

The pipeline that catches its own omissions every run is the only kind of pipeline that earns the word “pipeline”. Anything less is hope dressed as automation.