Reclaiming 20 GB: Dead Docker Images and Why Caddy Runs Better as systemd

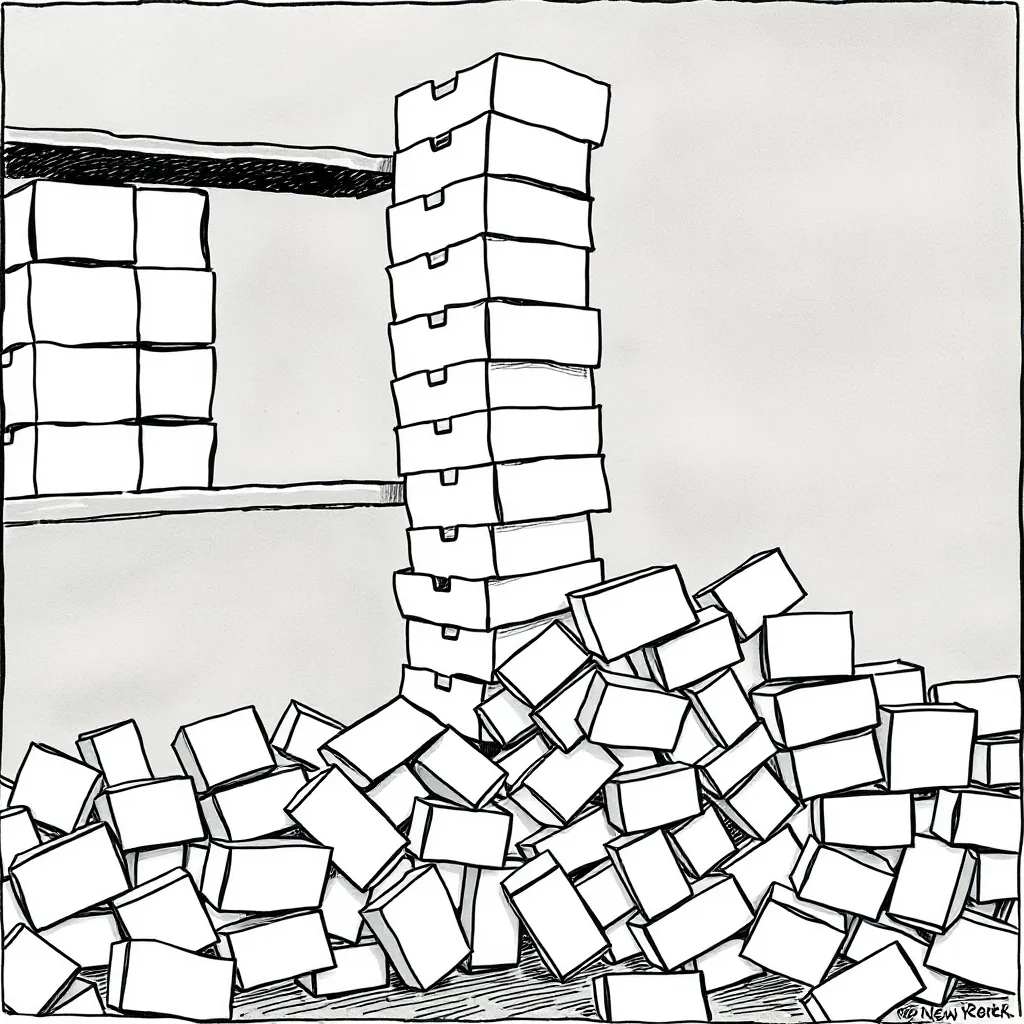

Dead Docker Images Eating Space

Every dangling image, every untagged layer, every WordPress stack I forgot to archive: all of it was still sitting on disk. The worst offender? vllm-node:latest at 18 GB. Never started. Never needed. It was built with Docker Compose, where rebuilding under the same tag leaves the previous image untagged (dangling), and interrupted builds can leave layers behind too. The compose file format version (3.8 here) has nothing to do with it.

    docker system df
    # Output:
    # TYPE            TOTAL   ACTIVE   SIZE     RECLAIMABLE
    # Images          12      3        21.2GB   18.4GB (86%)
    # Containers      3       3        1.2GB    0B (0%)
    # Local Volumes   5       2        2.1GB    1.5GB (71%)
    # Build Cache     14      0        1.8GB    1.8GB (100%)

Why it breaks: Docker’s build cache grows with every docker compose build. Untagged images pile up. Running docker system prune -a removes every image that is not used by a running container (stopped containers are pruned first), including OpenHands runtime images you might need later. I once lost three OpenHands images (each 15 GB) because I didn’t exclude them from the prune. The error message I hit along the way was cryptic: Error response from daemon: conflict: unable to delete 123abc45 (must be forced) - image is referenced in multiple repositories. Docker reports it when docker rmi is given an image ID that carries more than one tag.
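One way to avoid that kind of loss is to build the removal list yourself instead of relying on prune -a. The following is only a sketch under stated assumptions: filter_removable is a hypothetical helper, and the keep pattern openhands is a guess at how those images are named on your machine.

```shell
#!/bin/sh
# filter_removable KEEP_REGEX  (hypothetical helper, not a Docker command)
# Reads "repository:tag image_id" lines on stdin and prints the image IDs
# whose repository:tag does NOT match KEEP_REGEX.
filter_removable() {
    keep=$1
    awk -v keep="$keep" '$1 !~ keep { print $2 }'
}

# Usage (assumes the images to keep have "openhands" in their name):
#   docker images --format '{{.Repository}}:{{.Tag}} {{.ID}}' \
#     | filter_removable 'openhands' \
#     | xargs -r docker rmi
```

Leaving out -f on docker rmi is deliberate: Docker then refuses to delete images that a container still references, so a wrong pattern fails loudly instead of silently deleting something in use.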

Watch out for:

- docker system prune -a will wipe OpenHands images. Those are 15 GB each. Keep them if you plan to run models locally.

- Docker’s default json-file logging driver does not rotate logs at all. I found /var/lib/docker/containers/*/*-json.log files consuming 5 GB on my DGX Spark.

- Build cache can grow indefinitely if you rebuild frequently, even with --no-cache. Removing images does not shrink it; clear it explicitly with docker builder prune.

- Some images (like nvidia/cuda:12.3.0-base-ubuntu22.04) are multi-arch, but you can end up with the wrong variant if your system isn’t set up correctly. Check with docker manifest inspect nvidia/cuda:12.3.0-base-ubuntu22.04.

- Docker Desktop on macOS stores its VM disk image in ~/Library/Containers/com.docker.docker/Data/vms/0/. It can balloon to 10+ GB without warning.

- If you’re using Docker Swarm, docker system prune -a only cleans the node it runs on. You’ll need to run it on each node individually.
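To see how much space container logs take before deciding what to do about them, a small helper can total the *-json.log files. This is a minimal sketch; json_log_bytes is a hypothetical name, and the path in the usage comment is the one mentioned in the list above.

```shell
#!/bin/sh
# json_log_bytes DIR  (hypothetical helper)
# Prints the combined size in bytes of every *-json.log file under DIR.
json_log_bytes() {
    dir=$1
    # wc -c prints "<bytes> <file>" per file plus a "total" line per batch;
    # the awk filter skips those totals and sums the per-file counts itself.
    find "$dir" -type f -name '*-json.log' -exec wc -c {} + 2>/dev/null |
        awk '$2 != "total" { sum += $1 } END { print sum + 0 }'
}

# Usage (reading /var/lib/docker usually requires root):
#   json_log_bytes /var/lib/docker/containers
```

Rotation itself is configured through the json-file driver's max-size and max-file options, set under log-opts in /etc/docker/daemon.json; new containers pick the setting up after the daemon restarts.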

How to fix it:

    # Safe prune: stopped containers, dangling images, unused networks, build cache
    docker system prune -f
    # Targeted cleanup: remove dangling images by ID (xargs -r skips docker rmi when the list is empty)
    docker images -q --filter "dangling=true" | xargs -r docker rmi -f
    # Verify space reclaimed
    df -h /var/lib/docker

Caddy Running as systemd, Not Docker

Caddy needs port 443. Docker forces you to publish ports or use --network host, and both are messy. Caddy also has to start after tailscaled.service, which is easy in systemd and a headache in Docker Compose. I learned this the hard way when my Caddy container failed to start because tailscaled wasn’t ready: Compose’s depends_on only orders services inside the same project, so it cannot wait for a service running on the host.

    # Current service file: /etc/systemd/system/caddy-sovereign.service
    [Unit]
    Description=Caddy Web Server
    After=tailscaled.service network.target

    [Service]
    ExecStart=/usr/bin/caddy run --config /data/config/caddy/Caddyfile
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target

Why it breaks: Exposing port 443 from a container means publishing the port or using --network host, and depending on the Docker version and the user the container runs as, binding it inside the container can also require --cap-add=NET_BIND_SERVICE. None of these is clean for a proxy sitting in front of other services. I once tried --network host and ended up with port conflicts when another service tried to bind to 443. The error I hit was Error response from daemon: driver failed programming external connectivity on endpoint caddy (123abc45): Bind for 0.0.0.0:443 failed: port is already allocated, which is what Docker reports when a published port is already taken on the host.

Watch out for:

- If Caddy was part of a Docker Compose stack, migrating it means updating DNS records and firewall rules. Do it during low-traffic hours.

- Caddy’s config file (/data/config/caddy/Caddyfile) must be readable by the user the systemd service runs as. I once spent an hour debugging permission issues because the file was owned by root:docker instead of caddy:caddy.

- systemd’s Restart=on-failure can lead to rapid restarts if Caddy crashes. Add StartLimitIntervalSec=60 and StartLimitBurst=3 to the [Unit] section to prevent thrashing.

- If you’re using Caddy with Let’s Encrypt, the containerized version might not persist certificates when the container is re-created unless its data directory is mounted as a volume. The systemd version stores them in /data/caddy/certificates.

- Docker’s --restart=always doesn’t help after a reboot if Docker itself fails to start. systemd handles this more reliably.

- If you’re using Caddy as a reverse proxy in a container, Docker’s NAT or userland proxy can, depending on the network setup, hide the real client address, so X-Forwarded-For may end up carrying a bridge IP. Running Caddy on the host avoids that.
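The restart limits from the list above can live in a drop-in file rather than in the main unit. The following is a sketch, not the setup used in this post: write_restart_limit is a hypothetical helper and restart-limit.conf is an arbitrary file name; only the two StartLimit values come from the text.

```shell
#!/bin/sh
# write_restart_limit DROPIN_DIR  (hypothetical helper)
# Writes a systemd drop-in that allows at most 3 starts within 60 seconds.
# Both settings belong in [Unit], not [Service].
write_restart_limit() {
    dropin_dir=$1
    mkdir -p "$dropin_dir" || return 1
    cat > "$dropin_dir/restart-limit.conf" <<'EOF'
[Unit]
StartLimitIntervalSec=60
StartLimitBurst=3
EOF
}

# Usage (as root), assuming the unit name caddy-sovereign.service:
#   write_restart_limit /etc/systemd/system/caddy-sovereign.service.d
#   systemctl daemon-reload
```

A drop-in keeps the original service file untouched, so the limits can be removed again by deleting one file and reloading.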

How to fix it:

    # Stop and remove the Docker container if it exists
    docker stop caddy && docker rm caddy
    # Pick up the new unit file, then enable and start it
    systemctl daemon-reload
    systemctl enable --now caddy-sovereign.service
    # Confirm Caddy is running outside Docker
    ps aux | grep caddy

n8n Removed, Shell Scripts Do the Job

n8n was supposed to trigger WordPress builds. That stack is gone, and Mistral Small 4 now handles the content pipeline directly. The n8n instance ran version 1.2.1 of the image, which leaked memory on my machine: after a week it was using 4 GB of RAM and pushing my DGX Spark into heavy swapping.

    #!/bin/sh
    # Replacement script: new-article.sh
    # The API expects a model ID, not a display name. This alias is an assumption; check the model list.
    MODEL="mistral-small-latest"
    PROMPT="Generate a 500-word tech post about Sovereign AI hardware choices"
    # Send the prompt; the quoting splices the shell variables into the JSON body
    OUTPUT=$(curl -s -X POST "https://api.mistral.ai/v1/chat/completions" \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $MISTRAL_KEY" \
      -d '{"model": "'"$MODEL"'", "messages": [{"role": "user", "content": "'"$PROMPT"'"}]}')
    # Write the raw API response (JSON) to a dated file
    echo "$OUTPUT" > "/data/content/posts/$(date +%Y-%m-%d)-sovereign-ai.md"

Why it breaks: n8n added complexity for a task that’s simpler as a script. No VPS, no external triggers, no extra moving parts. The n8n workflow I replaced had 5 steps and relied on a MySQL database. The shell script version is about 10 lines and uses curl to talk to Mistral’s API.

Watch out for:

- Shell scripts need error handling. Add logging and retries if Mistral API calls fail.

- Mistral’s API has rate limits. I hit 429 Too Many Requests when I ran the script too frequently. Add sleep 5 between calls.

- The script assumes /data/content/posts/ exists and is writable. I once got Permission denied because the directory was owned by root.

- If the Mistral API key expires or is unset, the script fails silently: curl still exits 0 on an HTTP error, and the error body lands in the output file. Add a check for MISTRAL_KEY at the start.

- The script doesn’t validate the API response. If Mistral returns an error, the script will still write the output to the file.

- If you’re using a different model (like mistral-tiny), the prompt format might differ. Check the API docs for the correct schema.
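Several of these points (missing key, rate limits, unchecked failures) can be covered with two small helpers. This is a sketch under assumptions rather than the script this post runs: retry and require_env are hypothetical names, jq is assumed to be installed, and the response path .choices[0].message.content assumes an OpenAI-style reply, so verify it against the API docs.

```shell
#!/bin/sh
# require_env NAME  (hypothetical helper)
# Fails with a message on stderr if the named variable is unset or empty.
require_env() {
    eval "value=\${$1:-}"
    if [ -z "$value" ]; then
        echo "error: $1 is not set" >&2
        return 1
    fi
}

# retry MAX DELAY CMD...  (hypothetical helper)
# Runs CMD until it succeeds or MAX attempts are used, sleeping DELAY
# seconds between attempts. Returns 0 on success, 1 once attempts run out.
retry() {
    max=$1
    delay=$2
    shift 2
    attempt=1
    while :; do
        "$@" && return 0
        [ "$attempt" -ge "$max" ] && return 1
        attempt=$((attempt + 1))
        sleep "$delay"
    done
}

# Usage inside new-article.sh (BODY holds the JSON request):
#   require_env MISTRAL_KEY || exit 1
#   call_api() {
#       # -f makes curl exit non-zero on HTTP errors such as 429
#       curl -sf -X POST "https://api.mistral.ai/v1/chat/completions" \
#            -H "Content-Type: application/json" \
#            -H "Authorization: Bearer $MISTRAL_KEY" \
#            -d "$BODY"
#   }
#   OUTPUT=$(retry 3 5 call_api) || exit 1
#   # jq -e exits non-zero if the field is missing or null
#   printf '%s\n' "$OUTPUT" | jq -er '.choices[0].message.content'
```

Keeping the API call in its own function means the retry logic never needs to know about curl, and the 5-second delay matches the sleep suggested above.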

How to fix it:

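    # Remove n8n containers and images
    docker rm -f n8n
    docker rmi -f n8nio/n8n:latest n8nio/n8n:1.2.1
    # Replace with a cron job (the grep drops any earlier copy, so re-running does not duplicate the entry)
    (crontab -l 2>/dev/null | grep -vF "/usr/local/bin/new-article.sh"; echo "0 3 * * * /usr/local/bin/new-article.sh") | crontab -

What I Actually Use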

- DGX Spark: ARM64 server running local models and Caddy

- Mistral Small 4: Handles content generation and light inference

- systemd and cron: Caddy and Tailscale as services, scheduled jobs in cron, no Docker bloat